I’ve been neglecting this blog of late, partly because I’ve been ill and partly because I’ve been focusing my writing efforts elsewhere, but I thought it was high time I put something up. Followers might remember that last year at EGU I presented a poster on how varying the greyscale input channel affects Structure-from-Motion (SfM) photogrammetric blocks. Whilst the results showed only very slight differences, I didn’t present one interesting, subtle effect, which shows how robust the process is to differences within images.

The SfM process fits camera parameters that correct for distortions in the lens, and these can subsequently be extracted for separate analysis. Returning to the greyscaling theme for inclusion in my final thesis, I’ve been pulling out the lens models for each block, and noticed that the focal length fitted to each block changes subtly, but in a manner we might expect.

Chromatic aberration

Chromatic aberration arises because the refractive index of the glass in a lens varies with the wavelength of light, so the focal point of the image formed at each wavelength is slightly different. Thus, in colour images and in other optical equipment (I remember seeing it in many different sets of binoculars), we can see colour fringing around the edges of high-contrast features.

Chromatic aberration seen at the front (red fringe) and back (green fringe) of the candle

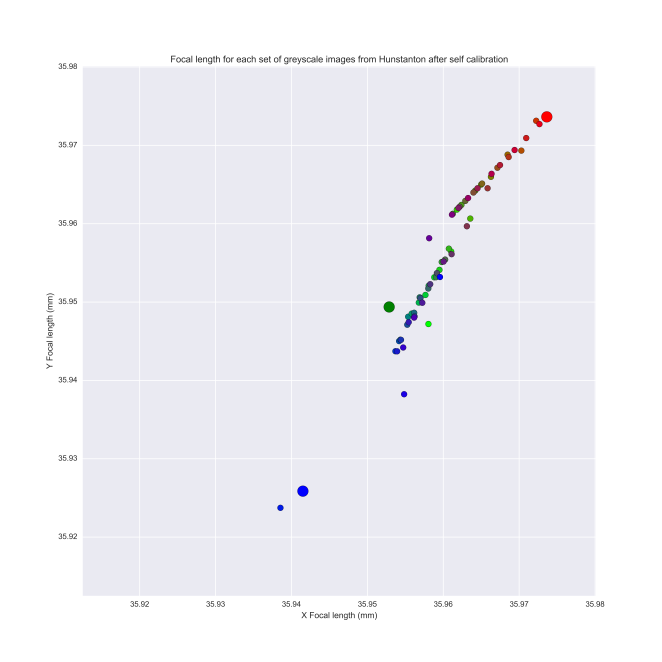
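To get a feel for the size and direction of the effect, here is a minimal sketch for an idealised single thin lens. It is not a model of any real camera lens, which will be multi-element and partly corrected for chromatic aberration. The Cauchy coefficients are approximate textbook values for BK7 borosilicate glass, and the 50 mm surface radii and the three wavelengths are assumptions picked purely for illustration.

```python
# Idealised thin biconvex lens: focal length as a function of wavelength.
# Assumptions (not from the original post): approximate Cauchy coefficients
# for BK7 glass, 50 mm surface radii, and nominal blue/green/red wavelengths.

def cauchy_index(wavelength_um, a=1.5046, b=0.00420):
    """Refractive index from the two-term Cauchy equation n = A + B / lambda^2."""
    return a + b / wavelength_um ** 2


def thin_lens_focal_length(n, r1_mm, r2_mm):
    """Lensmaker's equation for a thin lens in air: 1/f = (n - 1)(1/R1 - 1/R2)."""
    return 1.0 / ((n - 1.0) * (1.0 / r1_mm - 1.0 / r2_mm))


# Nominal wavelengths in micrometres (illustrative, not sensor-specific).
wavelengths_um = {"blue": 0.45, "green": 0.55, "red": 0.65}

# Symmetric biconvex lens: R1 = +50 mm, R2 = -50 mm.
focal_lengths_mm = {
    name: thin_lens_focal_length(cauchy_index(w), 50.0, -50.0)
    for name, w in wavelengths_um.items()
}

for name, f in focal_lengths_mm.items():
    print(f"{name:>5}: f = {f:.2f} mm")
```

In this toy case the refractive index falls as wavelength increases, so red light comes to a focus slightly further from the lens than blue.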

Within photogrammetric blocks built from a single channel, we might expect the fitted focal length to be optimised for that colour’s particular focal length in the lens being used. Indeed, this is demonstrable in the tests I have run on an RGB image set collected of a cliff near Hunstanton, UK: we see a slight lengthening of the focal length as more of the red channel is introduced to the image block, reflecting how that wavelength interacts with the lens.

Self-calibrating bundle adjustment fits longer focal lengths to greyscale bands containing a greater proportion of the red channel from an RGB image. Colours of the plotted points represent the RGB colour combination the greyscale photogrammetric block was derived from. The larger circles represent pure red, green and blue channels.
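For readers wondering what a greyscale band derived from an RGB colour combination might look like in practice, here is a minimal sketch that assumes each band is a simple weighted sum of the three channels. The function names and the tiny test image are hypothetical, and this is not necessarily the exact conversion used to build the blocks above. It is kept dependency-free for clarity; for full-size images an array library would be the sensible choice.

```python
# Weighted-sum greyscale conversion (illustrative sketch only).
# Function names and sample pixel values are hypothetical.

def normalise_weights(w_red, w_green, w_blue):
    """Scale the channel weights so that they sum to 1."""
    total = w_red + w_green + w_blue
    if total <= 0:
        raise ValueError("At least one channel weight must be positive")
    return (w_red / total, w_green / total, w_blue / total)


def to_greyscale(image, weights):
    """Convert rows of (r, g, b) pixels to rows of single grey values."""
    w_r, w_g, w_b = weights
    return [
        [w_r * r + w_g * g + w_b * b for (r, g, b) in row]
        for row in image
    ]


# A tiny 1 x 2 pixel image with made-up values.
image = [[(200, 100, 50), (10, 20, 30)]]

pure_red = to_greyscale(image, normalise_weights(1, 0, 0))   # red channel only
equal_mix = to_greyscale(image, normalise_weights(1, 1, 1))  # equal thirds
red_heavy = to_greyscale(image, normalise_weights(3, 1, 1))  # mostly red
```

Sweeping the weights between the pure channels gives a family of greyscale image sets, each of which could be run through SfM as its own block.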

Whilst this might be expected, I was surprised by how obvious the trend was, and it’s testament to how sensitive SfM is to even small changes in image blocks. Watch this space for more insight into what this means for assessing the quality of images going into SfM procedures, and how we might gain intuition into image quality as a result of this trend!